62: How to measure product manager performance

Early on in my blog, I wrote one of my most popular posts, “4 key ways to spot a successful product manager”, about measuring the performance of product managers. The problem is that since I wrote that article a lot — and I mean a huge amount — has changed both in product management and my own approach.

At his recent book launch, I found myself describing to Martin Eriksson some work I did at the UK’s Ministry of Justice on measuring product manager performance. So here’s an update to my original article, based on a real-life case study.

If you prefer, you can also watch a talk based on this article.

And if you’d like to benchmark your own skills, take my free self-assessment survey, then send me a message to confirm who you are. I’ll share with you (yes, still for free) a Google doc that benchmarks your results against an average taken from a range of your product manager peers.

In this article

- Introduction

- A bit of context

- A terrible idea

- Hurray for diversity

- Between compliance and disobedience

- Start with the team

- Peer feedback

- The distance between us

- Takeaways

- Examples

- Survey: good product manager skills and traits

- 1A. What are the most important ‘soft’ skills for a good product manager?

- 1B. What ‘soft’ skills are missing from the list above?

- 1C. Are there any ‘soft’ skills that should NOT be on the list at all?

- 2A. What ‘hard’ skills are required for a good product manager?

- 2B. What ‘hard’ skills are missing from the list above?

- 2C. Are there any ‘hard’ skills that should NOT be on the list at all?

- Survey: product manager self-assessment

A bit of context

Let me put this in context for you. When I wrote the original post about how to spot a good product manager, my experience up until that point had mostly been in the kind of companies that regarded product management as an evolution of project management — very process-driven. Gantt charts at dawn!

There, the product manager was meant to follow a waterfall process and attempt to make the user interface look like it hadn’t been designed by a database developer. (Even though it had.)

As a result, my initial take on measuring how well a product manager was doing was formed against that backdrop.

Since then, I’ve learned a helluva lot from the dozens of different organisations I’ve worked with in the private and public sectors, many of which are embracing a more up-to-date approach to product delivery (i.e. user-centric, evidence-led, multi-disciplinary teams).

A terrible idea

At the UK’s Ministry of Justice, I was Head of Product for the digital team, where I was responsible for 12 product managers with varying degrees of experience. Each product manager worked with her or his own multi-disciplinary delivery team (product management, research, design, development and delivery management).

Misguidedly, the senior management team was clinging on to their attempt to apply a command and control structure to a capable team of autonomous and intelligent people. Not the right approach at all. They had asked me to “stack rank” my product managers (assess their performance and put them in order from best to worst). There’s rarely any reason for doing this other than a desire to reduce headcount by culling the worst performers.

It didn’t appear to occur to them — though I pointed it out repeatedly — that this was frankly a terrible idea.

Hurray for diversity

For starters, I knew that each person on my team was already a strong performer. Some of them had been in product management far longer than I had. Others were relatively new product managers, but even taking that into account, they were doing just fine, thanks.

Even if it was possible to assess all 12 product managers on a relative, linear scale normalised for their relative levels of experience, my team was perfectly capable of reading between the lines – they’d object so much to the stack rank exercise that they’d probably leave on principle. And I wouldn’t blame them for doing so, because who doesn’t love being devalued and treated as a commodity, right?

Each member of my team had different strengths and weaknesses, different backgrounds and levels of experience, different skill sets and different approaches. Sure, they were all product managers, but they each did product management differently. This was exactly what I needed them to do, because they were all solving different user problems.

Between compliance and disobedience

It was becoming clear that I couldn’t refuse to assess their performance outright. This would lead to my swift removal, then someone less protective of my team than me would simply pick an arbitrary metric, rank my team and fire a few of them. I could serve my team better by treading a fine line between compliance and overt disobedience. I would do some kind of performance assessment, but I’d structure it so that a stack rank was bloody hard to do.

This is what I came up with.

Start with the team

First of all, I sat down with my team and talked through what was going on. I then surveyed them to establish what they felt were the most important soft (emotional) and hard (technical) skills a product manager needed. We used the top ten or so as the dimensions for their assessment. I also asked them how conversant they were with skills from other disciplines they would frequently interact with, such as research, design, content and development.

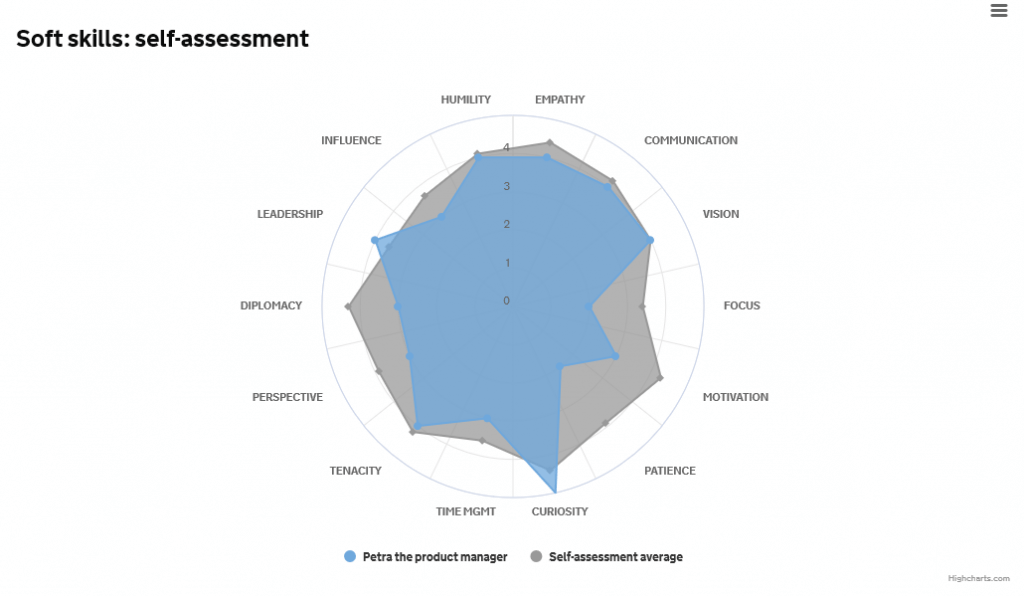

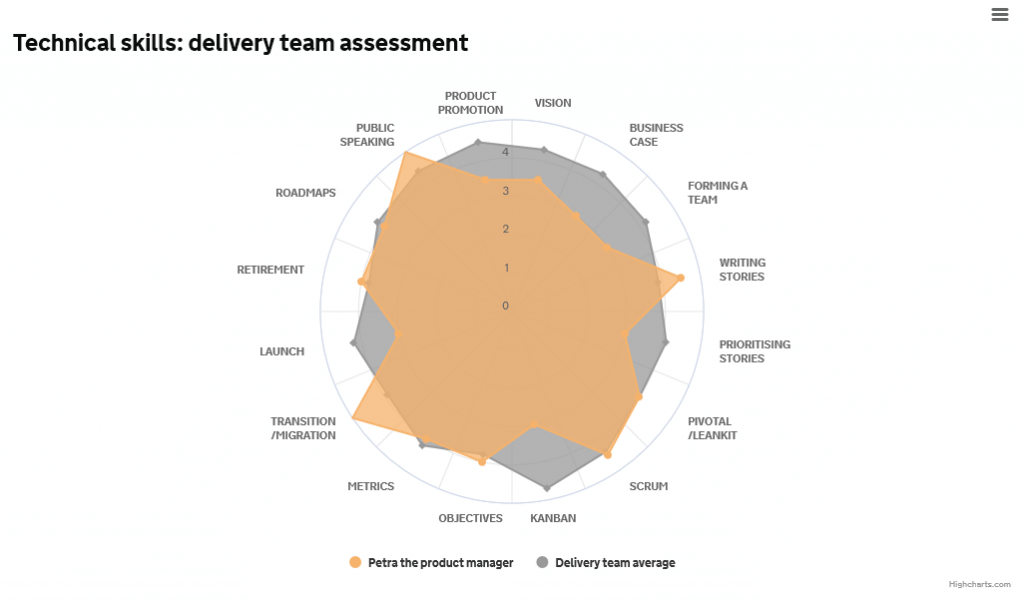

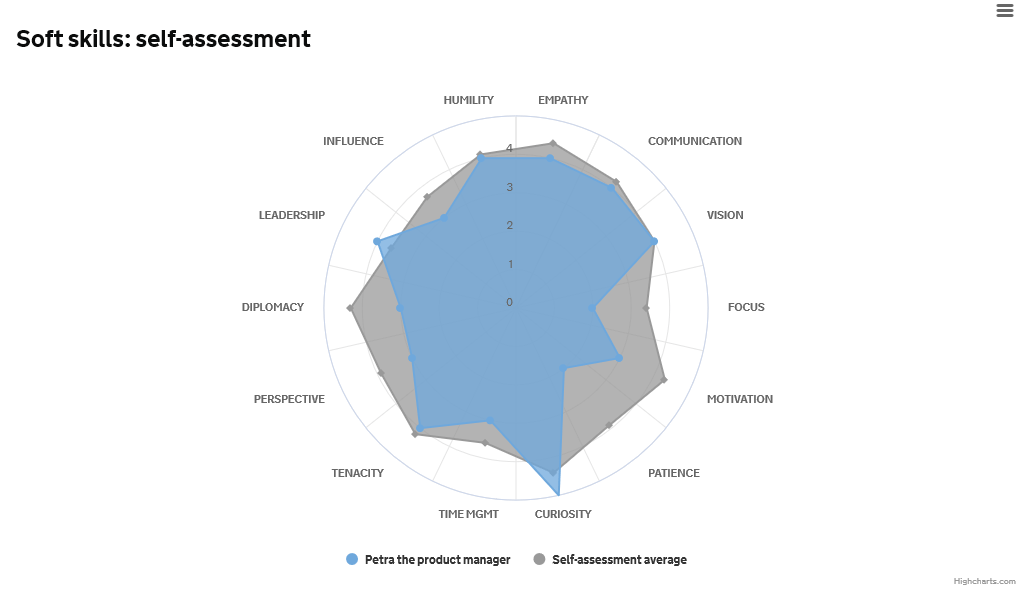
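As a rough illustration of how the survey responses could be boiled down to the "top ten or so" skills, here is a minimal sketch in Python. The response data, the `top_skills` function name and the tie-breaking rule are all assumptions made for the example; the article doesn't say how the votes were actually tallied.

```python
from collections import Counter

# Hypothetical responses: each inner list is one team member's picks
# for a "pick ALL the options" question such as 1A.
responses = [
    ["Empathy", "Communication", "Vision"],
    ["Empathy", "Curiosity", "Communication"],
    ["Empathy", "Tenacity"],
]


def top_skills(responses, n=10):
    """Return the n most frequently picked skills, most popular first."""
    # set(picks) guards against one person accidentally voting twice for a skill.
    votes = Counter(skill for picks in responses for skill in set(picks))
    # Sort by descending vote count, then alphabetically so ties are deterministic.
    ranked = sorted(votes.items(), key=lambda item: (-item[1], item[0]))
    return [skill for skill, _ in ranked[:n]]


print(top_skills(responses, n=2))
```

With only a dozen or so respondents, ties are likely, which is one reason "top ten or so" is a more honest cut-off than a strict top ten.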

Then I got them to assess themselves individually. They gave themselves a score out of 5 based on how skilled they felt they were in each area. I then plotted the results for each individual on a polar chart, and compared that to the team average.

It was immediately clear where the team felt it was stronger and weaker. After seeing the results, several of my team took the initiative to start filling in the gaps: one person buddied up with another product manager who was more experienced in Kanban, another put themselves forward to do more public speaking, and so on.
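To make the comparison with the team average concrete, here is a small sketch of the arithmetic. The names, scores and function names are invented for illustration, and it assumes every product manager has scored every skill out of 5.

```python
from statistics import mean

# Hypothetical self-assessment scores (1-5) for three made-up product managers.
self_scores = {
    "Petra": {"Empathy": 4, "Kanban": 2, "Public speaking": 3},
    "Sam": {"Empathy": 3, "Kanban": 5, "Public speaking": 2},
    "Alex": {"Empathy": 5, "Kanban": 2, "Public speaking": 1},
}


def team_average(scores):
    """Average each skill across everyone on the team."""
    skills = next(iter(scores.values())).keys()
    return {
        skill: mean(person[skill] for person in scores.values())
        for skill in skills
    }


def gaps_to_average(person_scores, average):
    """Positive means above the team average, negative means below."""
    return {skill: person_scores[skill] - average[skill] for skill in person_scores}


average = team_average(self_scores)
print(average)
print(gaps_to_average(self_scores["Petra"], average))
```

The point of the gap is to start a conversation about learning and development, not to produce a ranking: a negative number on Kanban simply suggests who might benefit from buddying up with whom.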

Peer feedback

But the really interesting part was yet to come. I asked each of the delivery teams to rate (anonymously) their product manager on the same sets of skills. A product manager needs to be effective in their interactions with their multi-disciplinary team, so I was interested in their team’s subjective perceptions of their product manager. Were they good communicators? Did they inspire?

This exercise again yielded some interesting insights. In some areas the product manager’s self-assessment and their delivery team’s assessment were in agreement. In others, the product manager had rated themselves weaker in an area, while the team thought they were particularly strong – and vice versa. These findings were also worth exploring further.
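One way to surface those agreements and mismatches is to compare each self-assessed score with the average of the anonymous team ratings. The sketch below is an assumption about how that could be done, not a record of the original analysis; the data, the `perception_gaps` name and the one-point threshold are all made up for the example.

```python
from statistics import mean


def perception_gaps(self_scores, peer_ratings, threshold=1.0):
    """Label each skill by how the team's average rating compares to the self-rating."""
    result = {}
    for skill, own in self_scores.items():
        diff = mean(peer_ratings[skill]) - own
        if diff >= threshold:
            label = "team rates higher"
        elif diff <= -threshold:
            label = "team rates lower"
        else:
            label = "in agreement"
        result[skill] = label
    return result


# Hypothetical data: one product manager's self-ratings and their
# delivery team's anonymous ratings for the same skills (all out of 5).
own = {"Communication": 2, "Vision": 4, "Diplomacy": 3}
peers = {
    "Communication": [4, 4, 5],
    "Vision": [3, 2, 3],
    "Diplomacy": [3, 4, 3],
}

for skill, label in perception_gaps(own, peers).items():
    print(f"{skill}: {label}")
```

The threshold is a judgment call. With small teams, a gap of less than a point is probably noise rather than a genuine difference in perception.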

The distance between us

Lastly, I asked senior management to rate the product managers in the same way I’d asked the delivery teams to. With a couple of notable exceptions, it was clear that senior managers had next to no idea how to answer the questions — they simply weren’t close enough to the product managers to make any kind of assessment.

While this highlighted a failing of the senior management team in what was declaring itself to be a ‘flat organisation’ (yeah, right), it was also a point for improvement for the product managers. Given how reliant they were on having senior management’s support to keep things moving apace, clearly they needed to invest more time in stakeholder management.

Takeaways

So, what do I want you to take away from this?

- Measuring product manager performance is multi-dimensional: a role requiring multiple skills means you need to assess multiple performance areas

- There is no single, absolute measure of performance: people have different strengths and weaknesses (and that’s okay)

- You don’t want a homogeneous team: user problems are not all identical, so you want a mixture of personalities, experience and abilities on your team to tackle them

- Never underestimate the power of a pretty graph: it’s never a waste to spend a bit more time making your stats easier to consume, so they tell their story more clearly

Examples

I used Highcharts to render the charts. Each product manager had their own page — for their eyes only. Senior management only got to see the aggregated averages in case they got any big ideas.
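For anyone wanting to try something similar, here is a hedged sketch of how the options for a Highcharts polar ("spiderweb") chart could be assembled and serialised to JSON. The option names follow Highcharts' documented polar chart configuration, which needs the `highcharts-more` module loaded on the page. The skills, scores and the `polar_chart_options` helper are invented for the example, and this is not the configuration used in the original reports.

```python
import json


def polar_chart_options(skills, individual, team_avg, title):
    """Build a Highcharts options object comparing one person with the team average."""
    return {
        "chart": {"polar": True, "type": "line"},
        "title": {"text": title},
        # One spoke per skill.
        "xAxis": {"categories": skills, "tickmarkPlacement": "on", "lineWidth": 0},
        # Scores are out of 5; polygon grid lines give the spiderweb look.
        "yAxis": {"gridLineInterpolation": "polygon", "min": 0, "max": 5},
        "series": [
            {"name": "Self-assessment", "data": individual, "pointPlacement": "on"},
            {"name": "Team average", "data": team_avg, "pointPlacement": "on"},
        ],
    }


# Hypothetical data for a made-up product manager.
options = polar_chart_options(
    skills=["Empathy", "Communication", "Vision", "Focus"],
    individual=[4, 3, 5, 2],
    team_avg=[3.5, 3.8, 3.2, 3.0],
    title="Soft skills: self-assessment vs team average",
)

print(json.dumps(options, indent=2))
```

The resulting JSON can be passed to `Highcharts.chart('container', options)` in the browser. For the senior management view, you would leave out the individual series and plot only the aggregated averages.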

I’ve got a live demo of the full report page available if you fancy taking a look. Needless to say, for this article the individual’s data has been anonymised (there was no product manager called Petra) and slightly randomised.

The two surveys that I used are here:

- What are good traits of a product manager? (to determine with the team the set of soft and hard skills they should be assessed on)

- Self-assessment survey (for each product manager to score themselves on a scale of 1-5 against each skill)

Similar versions of the self-assessment survey were also used for the product manager’s team and for senior management.

If you have any questions, pop them in the comments below.

The article above is copyright © 2012 — 2024 Jock Busuttil / Product People Limited. All rights reserved.

Survey: good product manager skills and traits

This survey (not the foregoing article) is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

This survey gathers the product team’s collective view on what a good product manager looks like. We then use this in the talent mapping exercise for individuals to identify their learning and development opportunities.

1A. What are the most important ‘soft’ skills for a good product manager?

Pick ALL the options that you think are the most important

- Empathy — the ability to put yourself in the shoes of the user to understand their needs from their perspective

- Communication — ability to speak your audience’s ‘language’ and tailor what you say to their needs

- Vision — to see what the ultimate product could and should be, and to enthuse others with that vision

- Focus — attention to detail

- Motivation — to roll up your sleeves and get stuck in; not to wait for people to hand you things on a plate

- Patience — when things don’t move as quickly as you need them to; to be able to calm the frustrations of others

- Curiosity — there is always something you don’t know; always be learning

- Time management — to be effective in your use of time, and make sure you’re working on the most urgent and important thing at any given time

- Tenacity — you will make mistakes and suffer setbacks; not giving up in the face of adversity

- Perspective — to divide your attention between the big picture and the fine detail, between the here-and-now and the long term

- Diplomacy — sometimes you will disagree with people; politeness and diplomacy will mean they keep talking to you afterwards

- Team leadership — you cannot do your job without the help and support of many other people, and your job is to get the best from people; and to lead by good example

1B. What ‘soft’ skills are missing from the list above?

List any traits of a good product manager you feel are missing

1C. Are there any ‘soft’ skills that should NOT be on the list at all?

Pick ALL the options that should not be on the list

- Empathy

- Communication

- Vision

- Focus

- Motivation

- Patience

- Curiosity

- Time management

- Tenacity

- Perspective

- Diplomacy

- Team leadership

2A. What ‘hard’ skills are required for a good product manager?

Pick ALL the options that you think are required

- Conducting user research

- Creating user personas

- Writing epics / user stories

- Prioritising epics / user stories

- Paper prototyping

- Using a user story / bug tracking tool

- Defining product vision

- Forming a multidisciplinary team

- Line management

- Data protection / information security

- Technology evaluation

- Participating in Scrum

- Participating in Kanban

- Setting SMART objectives / KPIs / success criteria

- Analysing and interpreting data / metrics

- Usability testing

- Transition / migration planning

- Service design

- Managing product roadmaps

- Writing business cases

- Navigating procurement

- Public speaking

- Writing product copy

2B. What ‘hard’ skills are missing from the list above?

List any skills a good product manager should have that are missing

2C. Are there any ‘hard’ skills that should NOT be on the list at all?

Pick ALL the options that should not be on the list

- Conducting user research

- Creating user personas

- Writing epics / user stories

- Prioritising epics / user stories

- Paper prototyping

- Using a user story / bug tracking tool

- Defining product vision

- Forming a multidisciplinary team

- Line management

- Data protection / information security

- Technology evaluation

- Participating in Scrum

- Participating in Kanban

- Setting SMART objectives / KPIs / success criteria

- Analysing and interpreting data / metrics

- Usability testing

- Transition / migration planning

- Service design

- Managing product roadmaps

- Writing business cases

- Navigating procurement

- Public speaking

- Writing product copy


Survey: product manager self-assessment

This survey (not the foregoing article) is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Based on the skills and traits we as a product team have rated as important, this survey allows us to assess ourselves individually and highlight any areas where we may have hidden talents or opportunities for learning and development. It’s okay not to be a black belt in everything :-)

For that reason, form submissions are NOT anonymous. However, please note that this information will expressly NOT be used for the purposes of comparing one individual to another.

Your respective teams and service managers will also be sent a similar survey so that I can compare what you think about yourselves with what your direct peers think.

The questions are divided into three sections:

1. Soft product management skills

2. Technical product management skills

3. Skills from other disciplines that product managers may be familiar with

— Jock

1. Soft skills

This section relates to ‘soft’ or non-technical skills of a product manager

How would you rate yourself on the following soft skills?

A rating on each skill is required

| Soft skill | Very poor | Poor | Okay | Good | Very good |
|---|---|---|---|---|---|
| EMPATHY — putting yourself in the shoes of the user to understand their needs from their perspective | ◯ | ◯ | ◯ | ◯ | ◯ |
| COMMUNICATION — storytelling, speaking your audience’s ‘language’ and tailoring what you say to their needs | ◯ | ◯ | ◯ | ◯ | ◯ |
| VISION — defining what the ultimate product could and should be, and enthusing others with that vision | ◯ | ◯ | ◯ | ◯ | ◯ |
| FOCUS — attention to detail | ◯ | ◯ | ◯ | ◯ | ◯ |
| MOTIVATION — rolling up your sleeves and getting stuck in | ◯ | ◯ | ◯ | ◯ | ◯ |
| PATIENCE — controlling your own impatience and calming the frustrations of others | ◯ | ◯ | ◯ | ◯ | ◯ |
| CURIOSITY — always eager to learn | ◯ | ◯ | ◯ | ◯ | ◯ |
| TIME MANAGEMENT — effective use of your time and ensuring you’re working on the most urgent and important thing at any given time | ◯ | ◯ | ◯ | ◯ | ◯ |
| TENACITY — not giving up in the face of adversity | ◯ | ◯ | ◯ | ◯ | ◯ |
| PERSPECTIVE — dividing your attention between the big picture and the fine detail, between the here-and-now and the long term | ◯ | ◯ | ◯ | ◯ | ◯ |
| DIPLOMACY — disagreeing in a way that will mean they keep talking to you afterwards | ◯ | ◯ | ◯ | ◯ | ◯ |
| LEADERSHIP — getting the best from people and setting a good example | ◯ | ◯ | ◯ | ◯ | ◯ |
| INFLUENCE — causing people to change direction without direct authority | ◯ | ◯ | ◯ | ◯ | ◯ |
| HUMILITY — placing the contributions of others above your own | ◯ | ◯ | ◯ | ◯ | ◯ |

2. Technical skills

This section relates to technical skills specific to product management

How would you rate yourself on the following technical skills?

A rating on each skill is required

| Technical skill | Very poor | Poor | Okay | Good | Very good |
|---|---|---|---|---|---|
| Defining product vision | ◯ | ◯ | ◯ | ◯ | ◯ |
| Making the business case | ◯ | ◯ | ◯ | ◯ | ◯ |
| Forming a multi-disciplinary team | ◯ | ◯ | ◯ | ◯ | ◯ |
| Writing epics and user stories | ◯ | ◯ | ◯ | ◯ | ◯ |
| Prioritising epics and user stories | ◯ | ◯ | ◯ | ◯ | ◯ |
| Using a user story and bug tracking tool | ◯ | ◯ | ◯ | ◯ | ◯ |
| Participating in Scrum | ◯ | ◯ | ◯ | ◯ | ◯ |
| Participating in Kanban | ◯ | ◯ | ◯ | ◯ | ◯ |
| Setting SMART objectives / KPIs / success criteria | ◯ | ◯ | ◯ | ◯ | ◯ |
| Analysing and interpreting data and metrics | ◯ | ◯ | ◯ | ◯ | ◯ |
| Transition / migration planning | ◯ | ◯ | ◯ | ◯ | ◯ |
| Product launch | ◯ | ◯ | ◯ | ◯ | ◯ |
| Product retirement | ◯ | ◯ | ◯ | ◯ | ◯ |
| Managing product roadmaps | ◯ | ◯ | ◯ | ◯ | ◯ |
| Public speaking | ◯ | ◯ | ◯ | ◯ | ◯ |
| Promoting your product | ◯ | ◯ | ◯ | ◯ | ◯ |

3. Skills from other disciplines

This section relates to other skills outside of core product management that a product manager may be familiar with

How experienced are you in the following skills?

A rating on each skill is required. Note the different rating scale for this question.

| Skill | No experience | Some familiarity only | Conversant (can discuss with specialist) | Some direct experience | Expert |
|---|---|---|---|---|---|
| Conducting user research | ◯ | ◯ | ◯ | ◯ | ◯ |
| Creating user personas | ◯ | ◯ | ◯ | ◯ | ◯ |
| Paper prototyping | ◯ | ◯ | ◯ | ◯ | ◯ |
| Data protection / information security | ◯ | ◯ | ◯ | ◯ | ◯ |
| Technology evaluation | ◯ | ◯ | ◯ | ◯ | ◯ |
| Usability testing | ◯ | ◯ | ◯ | ◯ | ◯ |
| Continuous integration / deployment | ◯ | ◯ | ◯ | ◯ | ◯ |
| Writing product copy | ◯ | ◯ | ◯ | ◯ | ◯ |
| Service design | ◯ | ◯ | ◯ | ◯ | ◯ |
| Interaction design | ◯ | ◯ | ◯ | ◯ | ◯ |
| Graphic design | ◯ | ◯ | ◯ | ◯ | ◯ |
| Writing long-form copy | ◯ | ◯ | ◯ | ◯ | ◯ |
| Writing microcopy | ◯ | ◯ | ◯ | ◯ | ◯ |
| Information architecture | ◯ | ◯ | ◯ | ◯ | ◯ |
| Business / process analysis | ◯ | ◯ | ◯ | ◯ | ◯ |
