How UK government digital services gather and use evidence

I gave a talk recently about how I’ve been using data and analytics to guide my decisions in product management. I’ve edited the transcript a little and split it into bite-size parts for your entertainment. This bit is about how UK government digital services gather and use evidence. The last bit was about why we can’t help jumping to conclusions.

A new approach in UK government

I spent about eight months recently as head of product for the UK’s Ministry of Justice – so, in government – and then a further three months more recently as the head of the product community for UK government as a whole at what’s called the Government Digital Service, or GDS.

And bit by bit since 2012, the whole of government has been moving to a very different approach for creating and managing the products – or services – that it offers people in the UK. And if you think about it, this is everything from applying for a driving licence all the way through to things like booking to visit a friend or relative in prison, or pretty much everything else, everything that government interacts with the public about.

The major revolution in thinking for them was that services exist to serve the needs of people first, not government. I know, it seems obvious, but it really wasn’t until relatively recently.

The (bad) old way

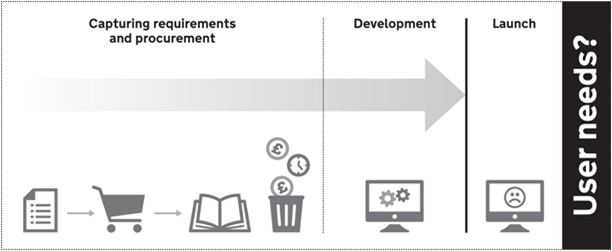

So they had a particular way before, the old way of doing things – and I know this happens a whole bunch in the private sector also – and it goes something like this:

A bunch of senior managers would get together and say “we have a problem”. They would generally decide the problem will be solved by a new CRM or ERP system — or sometimes both. Needless to say, they were usually wrong.

They would then task several middle-ranking managers to spend several weeks or months collating a whole bunch of assumptions, guesses and outright lies into a massive document they call a business case. This would then be used to retrospectively justify the conclusion the senior managers had already reached. And they’d still be wrong.

This hypothetical system would need a laundry list of specifications or requirements to flesh out what it needs to do so that the development team can get building. And again this is largely based on guesswork and results in an even larger set of documents than the business case.

Then some development would happen, which would take several times longer than everyone expected, not least because sweeping changes would be needed mid-way through the build. And because the allocated budget would already have been exceeded, whole sets of features would be cut out again.

So the resulting product ends up being less capable than the thing it replaced, and largely makes life impossible for the people who actually have to use the thing, but who only got to see it the week before launch in what would laughably be called user acceptance testing.

And so the users point out that – guess what – the thing doesn’t solve their problem, and that they in fact had a very different set of problems to solve, that the CRM or ERP system does nothing to solve them, and that the senior managers had completely missed the point in the first place.

Now I hope that doesn’t sound familiar, but I’m sure we’ve heard of places where that is certainly the case, and certainly in government that was very much how these large IT projects would play out. I’ve seen it a whole bunch of times, not just in government, but in private companies as well.

A better way

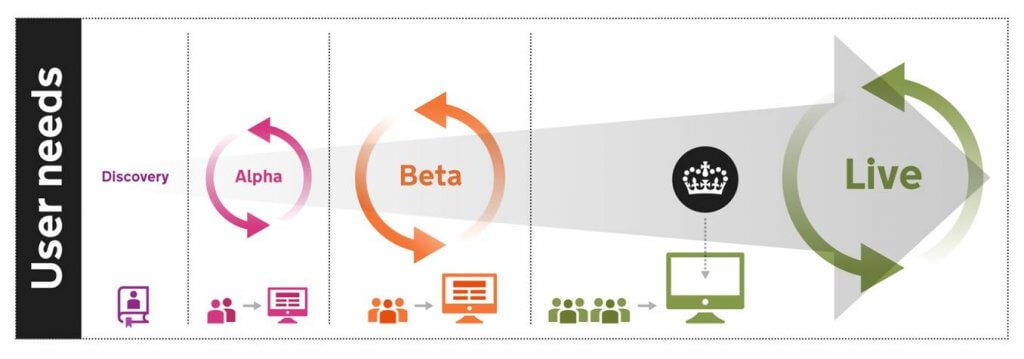

There is, however, a much better way. Instead – and this is the way that government tends to work now – the process starts with user needs, not government needs. So we’re putting the user needs right at the very forefront of our thinking. Whether from direct observation of data and analytics, or user feedback, we go in thinking that users have a particular problem that we can solve.

Then what we do is we go into a process called discovery, and we do this for a few weeks. This is a combination of desk- and field-based research with real users to challenge our assumptions, and really to understand the size and shape of the problem, the people who have it, and whether it’s possible to solve, and indeed whether it’s worth solving. There’s no point in spending a million pounds or dollars to solve a ten-thousand-dollar problem.

The discovery team usually consists of a product manager, user researcher, designer and sometimes a developer or a business analyst if we need their particular skills to understand the problem.

It’s a perfectly sensible result for the discovery phase to end with the conclusion that actually what we thought was a problem isn’t a problem, or that it isn’t valuable or technically possible to solve. Now in the bad old way of doing things, project teams would only find this out much, much later on in the process.

So that’s the discovery phase. Then the alpha phase is all about checking our understanding of the problem by running iterative tests and experiments, again with real users, that demonstrate we can solve aspects of the problem. By doing this, we’re learning more about the problem, and we start to learn about potentially how to solve that problem, about the solution.

At the end of alpha, we should have a pretty clear understanding of the users, their problems and the likely ways we’re going to solve them.

So then we move into beta. And all of these prototypes and experiments we’ve created up until now, we put to one side. Because now we start building the product for real. We want to build it to be as scalable, as robust and as secure as we need it to be, potentially – in the case of government – to be used by several million users.

The big difference here is that throughout beta, even though we’re not finished building the product yet, we’re still using the product out in the wild – we’re putting it in front of real users, and real users are using that product to solve their problems. They could be using it to apply for their driving licence or to renew their passport or things like that. And the reason why we do this is because it gives us this wealth of analytics and feedback from user testing and from people actually using the product, that helps us adjust and tweak the product to keep us on the right track.

When we’re able to demonstrate throughout this process that users are able to solve their underlying problem – whether it’s “I want to be able to drive a car, so I need a driving licence” or “I want to be able to travel internationally, so I need a passport” – then we stop adding new features. We can shift our focus from building new stuff to continuous improvement – small tweaks as needed to squash bugs or improve usability.

And then the majority of the team moves off onto the next major problem to solve.

The thing is that throughout the entire process, we’re running experiments, we’re gathering data, we’re doing analytics with real users, the actual people who will actually be using the product or service.

And it’s by doing this that we force ourselves to put aside our assumptions and engage the analytical part of our brain – evidence trumps opinion every single time.

Experiments aren’t scary

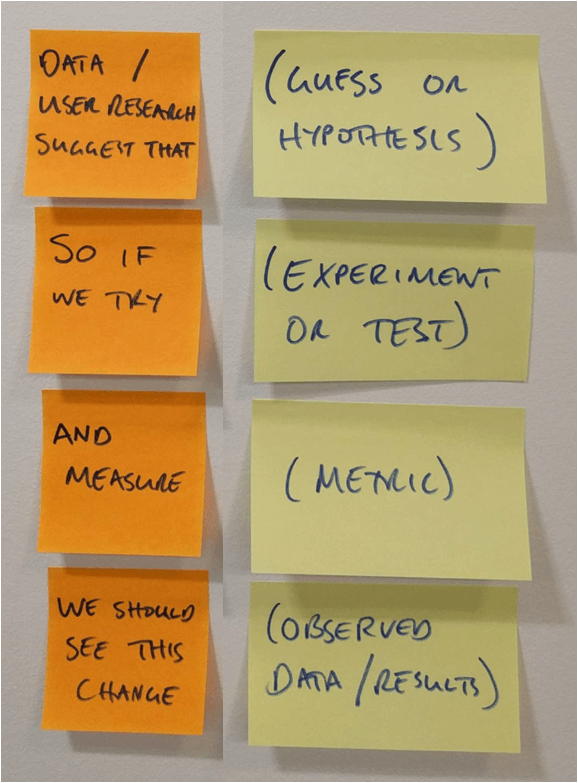

Experiments don’t have to be daunting or scary. Here’s a very quick template you can use. An experiment can be very quick. One example was in the Ministry of Justice: one of my product managers and his lead developer were having a pretty heated argument about whether the users would understand what a particular feature did.

So rather than listen to them arguing for the rest of the afternoon, I packed them both off with paper prototypes to a nearby cafe and told them not to come back until they’d spoken to 20 people. And when they came back about two hours later, the product manager grudgingly reported back that 18 of the 20 had proven him wrong. And this was a great thing, because now he and his lead developer were working with evidence, not opinion.

Any experiment you’re running follows this template. You’ve got some user research or evidence that suggests something you believe to be the case, your hypothesis or your guess. So if we try running a particular experiment or test – in this case going down to a cafe and asking people if they understood what this particular feature did – and measure the number of people who did or didn’t understand it, then we should be able to see whether or not people do understand that feature. And in this case, we had the overwhelming result that 18 of the 20 didn’t understand this feature, and so as a result the product manager was actually wrong.

So you can be doing this kind of thing all the time. It doesn’t have to be a big elaborate experiment with hundreds or thousands of people; you can do it relatively quickly for any question you need answered.
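If it helps to see the template written down, here is a minimal sketch in Python using the cafe test as the worked example. The field names, the 80% threshold and the "half of users" comparison are all assumptions made for illustration; none of them come from the talk or from any government standard. The last function is a rough sanity check on why 20 people can be enough: it works out how likely a result this lopsided would be if half of all users really did understand the feature.

```python
from dataclasses import dataclass
from math import comb


@dataclass
class Experiment:
    """One filled-in copy of the experiment template (field names are illustrative)."""
    evidence: str      # what prompted the question
    hypothesis: str    # what we believe to be the case
    test: str          # what we will try
    measure: str       # what we will count
    sample_size: int   # how many people we asked
    successes: int     # how many behaved as the hypothesis predicted
    threshold: float   # assumed share needed to call the hypothesis supported

    def success_rate(self) -> float:
        return self.successes / self.sample_size

    def supported(self) -> bool:
        return self.success_rate() >= self.threshold


def chance_of_at_most(successes: int, sample_size: int, p: float = 0.5) -> float:
    """Probability of seeing this many successes or fewer, if each person
    independently understood the feature with probability p."""
    return sum(
        comb(sample_size, k) * p**k * (1 - p) ** (sample_size - k)
        for k in range(successes + 1)
    )


cafe_test = Experiment(
    evidence="Product manager and lead developer disagree about a feature",
    hypothesis="Users will understand what the feature does",
    test="Show paper prototypes to people in a nearby cafe",
    measure="Number of people who understood the feature",
    sample_size=20,
    successes=2,     # 18 of the 20 did not understand it
    threshold=0.8,   # assumption for illustration, not a figure from the talk
)

print(f"Understood the feature: {cafe_test.success_rate():.0%}")
print(f"Hypothesis supported: {cafe_test.supported()}")
print(f"Chance of 2 or fewer if half of users understood: {chance_of_at_most(2, 20):.4f}")
```

The point of writing the threshold down before you go to the cafe is that nobody gets to move the goalposts once the results come in.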

Put users first

The next key learning for you is: put your users first. Don’t put the needs of your organisation before those of your users. Absolutely take them [your organisation’s needs] into account, but put your users first.

Next time: the benefits of open and transparent data